What’s New


climpred v2.3.0 (2022-11-25)#

Note

As both maintainers moved out of academia into industry, this will probably be the last release for a while. If you are interested in maintaining climpred, please ping us.

Bug Fixes#

Fix reference="persistence" for resampled init. (GH#730, GH#731) Aaron Spring.

HindcastEnsemble.verify(comparison="m2o", reference="uninitialized", dim="init"). (GH#735, GH#731) Aaron Spring.

HindcastEnsemble.remove_bias() does not drop single item lead dimension. (GH#771, GH#773) Aaron Spring.

New Features#

Refactored HindcastEnsemble.bootstrap() and PerfectModelEnsemble.bootstrap() based on HindcastEnsemble.verify() and PerfectModelEnsemble.verify(), which makes them more comparable. pers_sig is removed. Also, reference=["climatology", "persistence"] skill has variance if resample_dim='init'. bootstrap relies on one of the following values of set_option(resample_skill_func="..."):

"loop": calls climpred.bootstrap.resample_skill_loop(), which loops over iterations and calls verify every single time. Most understandable and stable, but slow.

"exclude_resample_dim_from_dim": calls climpred.bootstrap.resample_skill_exclude_resample_dim_from_dim(), which calls verify(dim=dim_without_resample_dim), resamples over resample_dim and then takes a mean over resample_dim if in dim. Enables HindcastEnsemble.bootstrap(resample_dim="init", alignment="same_verifs"). Fast alternative for resample_dim="init".

"resample_before": calls climpred.bootstrap.resample_skill_resample_before(), which resamples the iteration dimension and then calls verify vectorized. Fast alternative for resample_dim="member".

"default": climpred decides which to use.

(relates to GH#375, GH#731) Aaron Spring.

climpred.set_option(resample_skill_func='exclude_resample_dim_from_dim') allows HindcastEnsemble.bootstrap(alignment='same_verifs', resample_dim='init'). Does not work for pearson_r-derived metrics. (GH#582, GH#731) Aaron Spring.

climpred.utils.convert_init_lead_to_valid_time_lead() converts data(init, lead) to data(valid_time, lead) to visualize the predictability barrier; climpred.utils.convert_valid_time_lead_to_init_lead() does the reverse. (GH#774, GH#775, GH#783) Aaron Spring.
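The relationship between the (init, lead) and (valid_time, lead) layouts can be pictured with plain dictionaries. This is only a sketch and not how climpred implements the conversion: it assumes integer years for init and annual leads, so that valid_time = init + lead, and the helper names to_valid_time_lead and to_init_lead are hypothetical.

```python
def to_valid_time_lead(data):
    """Re-index {(init, lead): value} as {(valid_time, lead): value}.

    Assumption for illustration: integer-year inits and annual leads,
    so valid_time = init + lead.
    """
    return {(init + lead, lead): value for (init, lead), value in data.items()}


def to_init_lead(data):
    """Reverse mapping: {(valid_time, lead): value} -> {(init, lead): value}."""
    return {(valid - lead, lead): value for (valid, lead), value in data.items()}


# Toy forecast whose value encodes init * 10 + lead, so entries are traceable.
forecast = {
    (init, lead): init * 10 + lead
    for init in (2000, 2001, 2002)
    for lead in (1, 2)
}
by_valid_time = to_valid_time_lead(forecast)
round_trip = to_init_lead(by_valid_time)
```

In the valid_time layout, all forecasts that verify at the same date share one key component, which is what makes features tied to a calendar date line up across leads.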

Internals/Minor Fixes#

Refactor asv benchmarking. Add run-benchmarks label to PR to run asv via Github Actions. (GH#664, GH#718) Aaron Spring.

Remove ipython from requirements.txt. (GH#720) Aaron Spring.

Calculating np.isin on asi8 instead of xr.CFTimeIndex speeds up HindcastEnsemble.verify() and HindcastEnsemble.bootstrap() with large number of inits. (GH#414, GH#724) Aaron Spring.

Add option bootstrap_resample_skill_func to choose how skill is resampled in HindcastEnsemble.bootstrap() and PerfectModelEnsemble.bootstrap(), see set_options. (GH#731) Aaron Spring.

Add option resample_iterations_func to decide whether xskillscore.resampling.resample_iterations() or xskillscore.resampling.resample_iterations_idx() should be used, see set_options. (GH#731) Aaron Spring.

Add option bootstrap_uninitialized_from_iterations_mean to exchange uninitialized skill with the iteration mean uninitialized. Defaults to False, see set_options. (GH#731) Aaron Spring.

alignment="same_verifs" will not result in NaNs in valid_time. (GH#777) Aaron Spring.

HindcastEnsemble.plot_alignment(return_xr=True) contains valid_time coordinate. (GH#779) Aaron Spring.

Bug Fixes#

Fix PerfectModel_persistence_from_initialized_lead_0=True with multiple references. (GH#732, GH#733) Aaron Spring.

Documentation#

Add verify dim example showing how HindcastEnsemble.verify() and PerfectModelEnsemble.verify() are sensitive to dim and how dim answers different research questions. (GH#740) Aaron Spring.

climpred v2.2.0 (2021-12-20)#

Bug Fixes#

Fix when creating valid_time from lead.attrs["units"] in ["seasons", "years"] with multi-month stride in init. (GH#698, GH#700) Aaron Spring.

Fix seasonality="season" in reference="climatology". (GH#641, GH#703) Aaron Spring.

New Features#

Upon instantiation, PredictionEnsemble generates new 2-dimensional coordinate valid_time for initialized from init and lead, which is matched with time from verification during alignment. (GH#575, GH#675, GH#678) Aaron Spring.

    >>> import climpred
    >>> hind = climpred.tutorial.load_dataset("CESM-DP-SST")
    >>> hind.lead.attrs["units"] = "years"
    >>> climpred.HindcastEnsemble(hind).get_initialized()
    <xarray.Dataset>
    Dimensions:     (lead: 10, member: 10, init: 64)
    Coordinates:
      * lead        (lead) int32 1 2 3 4 5 6 7 8 9 10
      * member      (member) int32 1 2 3 4 5 6 7 8 9 10
      * init        (init) object 1954-01-01 00:00:00 ... 2017-01-01 00:00:00
        valid_time  (lead, init) object 1955-01-01 00:00:00 ... 2027-01-01 00:00:00
    Data variables:
        SST         (init, lead, member) float64 ...

Allow lead as float also if calendar="360_day" or lead.attrs["units"] not in ["years","seasons","months"]. (GH#564, GH#675) Aaron Spring.

Implement HindcastEnsemble.generate_uninitialized() resampling years without replacement from initialized. (GH#589, GH#591) Aaron Spring.

Implement Logarithmic Ensemble Skill Score _less(). (GH#239, GH#687) Aaron Spring.

HindcastEnsemble.remove_seasonality() and PerfectModelEnsemble.remove_seasonality() remove the seasonality of all climpred datasets. (GH#530, GH#688) Aaron Spring.

Add keyword groupby in HindcastEnsemble.verify(), PerfectModelEnsemble.verify(), HindcastEnsemble.bootstrap() and PerfectModelEnsemble.bootstrap() to group skill by initializations seasonality. (GH#635, GH#690) Aaron Spring.

    >>> import climpred
    >>> hind = climpred.tutorial.load_dataset("NMME_hindcast_Nino34_sst")
    >>> obs = climpred.tutorial.load_dataset("NMME_OIv2_Nino34_sst")
    >>> hindcast = climpred.HindcastEnsemble(hind).add_observations(obs)
    >>> # skill for each init month separated
    >>> skill = hindcast.verify(
    ...     metric="rmse",
    ...     dim="init",
    ...     comparison="e2o",
    ...     skipna=True,
    ...     alignment="maximize",
    ...     groupby="month",
    ... )
    >>> skill
    <xarray.Dataset>
    Dimensions:  (month: 12, lead: 12, model: 12)
    Coordinates:
      * lead     (lead) float64 0.0 1.0 2.0 3.0 4.0 5.0 6.0 7.0 8.0 9.0 10.0 11.0
      * model    (model) object 'NCEP-CFSv2' 'NCEP-CFSv1' ... 'GEM-NEMO'
        skill    <U11 'initialized'
      * month    (month) int64 1 2 3 4 5 6 7 8 9 10 11 12
    Data variables:
        sst      (month, lead, model) float64 0.4127 0.3837 0.3915 ... 1.255 3.98
    >>> skill.sst.plot(hue="model", col="month", col_wrap=3)

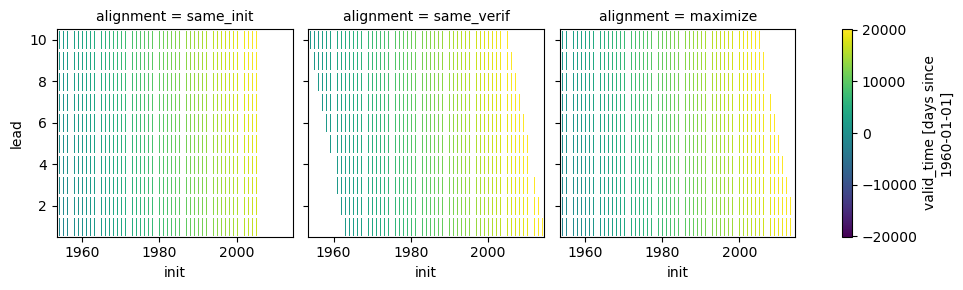
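What grouping skill by initialization month means can be shown without xarray. The following is a minimal sketch, not climpred's code path: it assumes each record is a hypothetical (init_month, forecast, observation) triple and computes one RMSE per init month.

```python
import math
from collections import defaultdict


def rmse_by_init_month(records):
    """Compute RMSE separately for each initialization month.

    records: iterable of (init_month, forecast, observation) triples
    (a hypothetical layout chosen for this illustration).
    """
    squared_errors = defaultdict(list)
    for month, forecast, observation in records:
        squared_errors[month].append((forecast - observation) ** 2)
    return {
        month: math.sqrt(sum(errors) / len(errors))
        for month, errors in sorted(squared_errors.items())
    }


records = [
    (1, 1.0, 0.0),  # January inits
    (1, 3.0, 2.0),
    (7, 2.0, 2.0),  # July inits
    (7, 4.0, 0.0),
]
skill_by_month = rmse_by_init_month(records)
```

Each group only sees the forecasts initialized in its month, so the result gains a month key in the same way the skill dataset above gains a month dimension.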

HindcastEnsemble.plot_alignment() shows how forecast and observations are aligned based on the alignment keyword. This may help in understanding which dates are matched for the different alignment approaches. (GH#701, GH#702) Aaron Spring.

    In [1]: from climpred.tutorial import load_dataset
    In [2]: hindcast = climpred.HindcastEnsemble(
       ...:     load_dataset("CESM-DP-SST")
       ...: ).add_observations(load_dataset("ERSST"))
       ...:
    In [3]: hindcast.plot_alignment(edgecolor="w")
    Out[3]: <xarray.plot.facetgrid.FacetGrid at 0x7f97810fc5b0>

Add attrs to new coordinates created by climpred. (GH#695, GH#697) Aaron Spring.

Add seasonality="weekofyear" in reference="climatology". (GH#703) Aaron Spring.

Compute reference="persistence" in PerfectModelEnsemble from initialized first lead if set_options(PerfectModel_persistence_from_initialized_lead_0=True) (False by default) using compute_persistence_from_first_lead(). (GH#637, GH#706) Aaron Spring.

Internals/Minor Fixes#

Reduce dependencies. (GH#686) Aaron Spring.

Add typing. (GH#685, GH#692) Aaron Spring.

Refactor add_attrs into HindcastEnsemble.verify() and HindcastEnsemble.bootstrap(). Now all keywords are captured in the skill dataset attributes .attrs. (GH#475, GH#694) Aaron Spring.

Docstrings formatting with blackdocs. (GH#708) Aaron Spring.

Documentation#

Refresh all docs with sphinx_book_theme and myst_nb. (GH#707, GH#708, GH#709, GH#710) Aaron Spring.

climpred v2.1.6 (2021-08-31)#

Adding on to v2.1.5, more bias reduction methods wrapped from xclim are implemented.

Bug Fixes#

Fix results="p" in HindcastEnsemble.bootstrap() and PerfectModelEnsemble.bootstrap() when reference='climatology'. (GH#668, GH#670) Aaron Spring.

HindcastEnsemble.remove_bias() for how in ["modified_quantile", "basic_quantile", "gamma_mapping", "normal_mapping"] from bias_correction takes all member to create model distribution. (GH#667) Aaron Spring.

New Features#

Allow more bias reduction methods wrapped from xclim in HindcastEnsemble.remove_bias():

how="EmpiricalQuantileMapping": xclim.sdba.adjustment.EmpiricalQuantileMapping

how="DetrendedQuantileMapping": xclim.sdba.adjustment.DetrendedQuantileMapping

how="PrincipalComponents": xclim.sdba.adjustment.PrincipalComponents

how="QuantileDeltaMapping": xclim.sdba.adjustment.QuantileDeltaMapping

how="Scaling": xclim.sdba.adjustment.Scaling

how="LOCI": xclim.sdba.adjustment.LOCI

These methods do not respond to OPTIONS['seasonality'] like the other methods. Provide group="init.month" to group by month or group='init' to skip grouping. Provide group=None or skip group to use init.{OPTIONS['seasonality']}. (GH#525, GH#662, GH#666, GH#671) Aaron Spring.

climpred v2.1.5 (2021-08-12)#

While climpred was used in the ASP summer colloquium 2021, many new features in HindcastEnsemble.remove_bias() were implemented.

Breaking changes#

Renamed cross_validate to cv=False in HindcastEnsemble.remove_bias(). Only used when train_test_split='unfair-cv'. (GH#648, GH#655) Aaron Spring.

Bug Fixes#

Shift back init by lead after HindcastEnsemble.verify(). (GH#644, GH#645) Aaron Spring.

New Features#

HindcastEnsemble.remove_bias() accepts new keyword train_test_split='fair/unfair/unfair-cv' (default unfair) following Risbey et al. 2021. (GH#648, GH#655) Aaron Spring.

Allow more bias reduction methods in HindcastEnsemble.remove_bias():

how="additive_mean": correcting the mean forecast additively (already implemented)

how="multiplicative_mean": correcting the mean forecast multiplicatively

how="multiplicative_std": correcting the standard deviation multiplicatively

Wrapped from bias_correction:

how="modified_quantile": Bai et al. 2016

how="basic_quantile": Themeßl et al. 2011

how="gamma_mapping" and how="normal_mapping": Switanek et al. 2017

HindcastEnsemble.remove_bias() now does leave-one-out cross validation when passing cv='LOO' and train_test_split='unfair-cv'. cv=True falls back to cv='LOO'. (GH#643, GH#646) Aaron Spring.

Add new metrics _spread() and _mul_bias() (GH#638) Aaron Spring.

Add new tutorial datasets: (GH#651) Aaron Spring.

NMME_OIv2_Nino34_sst and NMME_hindcast_Nino34_sst with monthly leads

Observations_Germany and ECMWF_S2S_Germany with daily leads

Metadata from CF conventions are automatically attached by cf_xarray. (GH#639, GH#656) Aaron Spring.

Raise warning when dimensions time, init or member are chunked to show user how to circumvent xskillscore chunking ValueError when passing these dimensions as dim in HindcastEnsemble.verify() or HindcastEnsemble.bootstrap(). (GH#509, GH#658) Aaron Spring.

Implement PredictionEnsemble.chunks. (GH#658) Aaron Spring.
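The idea behind combining additive mean bias correction with leave-one-out cross validation, as mentioned for remove_bias() in this release, can be sketched in a few lines. This illustrates the general technique under simplifying assumptions (one value per init, a single lead) and is not climpred's implementation; the function name is hypothetical.

```python
def remove_mean_bias_loo(forecasts, observations):
    """Additive mean bias correction with leave-one-out cross validation.

    For each init i, the mean bias is estimated from all other inits,
    so the forecast being corrected never informs its own correction.
    """
    n = len(forecasts)
    if n != len(observations) or n < 2:
        raise ValueError("need at least two paired forecasts and observations")
    errors = [f - o for f, o in zip(forecasts, observations)]
    total_error = sum(errors)
    corrected = []
    for i, forecast in enumerate(forecasts):
        bias_without_i = (total_error - errors[i]) / (n - 1)
        corrected.append(forecast - bias_without_i)
    return corrected


forecasts = [2.0, 3.0, 4.0]
observations = [1.0, 1.0, 1.0]
corrected = remove_mean_bias_loo(forecasts, observations)
# corrected == [-0.5, 1.0, 2.5]
```

Without the leave-one-out step, every forecast would be shifted by the same bias of 2.0 estimated from all inits, including the one being corrected.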

Documentation#

Speed up ENSO monthly example with IRIDL server-side preprocessing (see context) (GH#594, GH#633) Aaron Spring.

Add CITATION.cff. Please cite Brady and Spring, 2020. (GH) Aaron Spring.

Use NMME_OIv2_Nino34_sst and NMME_hindcast_Nino34_sst with monthly leads for bias reduction demonstrating HindcastEnsemble.remove_bias(). (GH#646) Aaron Spring.

climpred v2.1.4 (2021-06-28)#

New Features#

Allow hours, minutes and seconds as lead.attrs['units']. (GH#404, GH#603) Aaron Spring.

Allow setting seasonality via set_options to specify how to group in verify(reference='climatology') or in HindcastEnsemble.remove_bias(). (GH#529, GH#593, GH#603) Aaron Spring.

Allow weekofyear via datetime in HindcastEnsemble.remove_bias(), but not yet implemented in verify(reference='climatology'). (GH#529, GH#603) Aaron Spring.

Allow more dimensions in initialized than in observations. This is particularly useful if you have forecasts from multiple models (in a model dimension) and want to verify against the same observations. (GH#129, GH#528, GH#619) Aaron Spring.

Automatically rename dimensions to CLIMPRED_ENSEMBLE_DIMS ["init","member","lead"] if CF standard_names in coordinate attributes match: (GH#613, GH#622) Aaron Spring.

"init": "forecast_reference_time"

"member": "realization"

"lead": "forecast_period"

If lead coordinate is pd.Timedelta, PredictionEnsemble converts lead coordinate upon instantiation to integer lead and corresponding lead.attrs["units"]. (GH#606, GH#627) Aaron Spring.

Require xskillscore >= 0.0.20. _rps() now works with different category_edges for observations and forecasts, see daily ECMWF example. (GH#629, GH#630) Aaron Spring.

Set options warn_for_failed_PredictionEnsemble_xr_call, warn_for_rename_to_climpred_dims, warn_for_init_coords_int_to_annual, climpred_warnings via set_options. (GH#628, GH#631) Aaron Spring.

PredictionEnsemble acts like xarray.Dataset and understands data_vars, dims, sizes, coords, nbytes, equals, identical, __iter__, __len__, __contains__, __delitem__. (GH#568, GH#632) Aaron Spring.
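The automatic renaming by CF standard_name listed in this release can be pictured as a lookup over coordinate attributes. The sketch below uses plain dictionaries instead of xarray objects; the mapping is taken from the entry above, while the function name and the input layout are assumptions made for illustration.

```python
# Mapping from CF standard_name to climpred dimension name, as listed above.
CF_STANDARD_NAME_TO_CLIMPRED_DIM = {
    "forecast_reference_time": "init",
    "realization": "member",
    "forecast_period": "lead",
}


def climpred_dim_renames(coord_attrs):
    """Return {old_name: new_name} for coordinates with a matching standard_name.

    coord_attrs: {coordinate_name: attrs_dict}, a hypothetical stand-in for
    the attributes found on a dataset's coordinates.
    """
    renames = {}
    for name, attrs in coord_attrs.items():
        target = CF_STANDARD_NAME_TO_CLIMPRED_DIM.get(attrs.get("standard_name"))
        if target is not None and target != name:
            renames[name] = target
    return renames


coords = {
    "time": {"standard_name": "forecast_reference_time"},
    "number": {"standard_name": "realization"},
    "step": {"standard_name": "forecast_period"},
    "lat": {"standard_name": "latitude"},
}
renames = climpred_dim_renames(coords)
```

With xarray, a dictionary of this shape could then be passed to Dataset.rename.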

Documentation#

Add documentation page about publicly available initialized datasets and corresponding climpred examples. (GH#510, GH#561, GH#600) Aaron Spring.

Add GEFS example for numerical weather prediction. (GH#602, GH#603) Aaron Spring.

Add subseasonal daily ECMWF example using climetlab to access hindcasts from ECMWF cloud. (GH#587, GH#603) Aaron Spring.

Add subseasonal daily S2S example accessing S2S output on IRIDL with a cookie and working with “on-the-fly” reforecasts with hdate dimension. (GH#588, GH#593) Aaron Spring.

Added example climpred on GPU. Running PerfectModelEnsemble.verify() on GPU with cupy-xarray finishes 10x faster. (GH#592, GH#607) Aaron Spring.

How to work with biweekly aggregates in climpred, see daily ECMWF example. (GH#625, GH#630) Aaron Spring.

Internals/Minor Fixes#

Add weekly upstream CI, which raises issues for failures. Adapted from xarray. Manually trigger by git commit -m '[test-upstream]'. Skip climpred_testing CI by git commit -m '[skip-ci]'. (GH#518, GH#596) Aaron Spring.

climpred v2.1.3 (2021-03-23)#

Breaking changes#

New Features#

HindcastEnsemble.verify(), PerfectModelEnsemble.verify(), HindcastEnsemble.bootstrap() and PerfectModelEnsemble.bootstrap() accept reference climatology. Furthermore, reference persistence also allows probabilistic metrics. (GH#202, GH#565, GH#566) Aaron Spring.

Added new metric _roc Receiver Operating Characteristic as metric='roc'. (GH#566) Aaron Spring.

Bug fixes#

HindcastEnsemble.verify() and HindcastEnsemble.bootstrap() accept dim as list, set, tuple or str. (GH#519, GH#558) Aaron Spring.

PredictionEnsemble.map() now does not fail silently when applying a function to all xr.Datasets of PredictionEnsemble. Instead, UserWarnings are raised. Furthermore, PredictionEnsemble.map(func, *args, **kwargs) applies the function only to Datasets with matching dims if dim="dim0_or_dim1" is passed as **kwargs. (GH#417, GH#437, GH#552) Aaron Spring.

_rpc was fixed in xskillscore>=0.0.19 and hence is not falsely limited to 1 anymore. (GH#562, GH#566) Aaron Spring.

Internals/Minor Fixes#

Docstrings are now tested in GitHub actions continuous integration. (GH#545, GH#560) Aaron Spring.

Github actions now cancels previous commits, instead of running the full testing suite on every single commit. (GH#560) Aaron Spring.

PerfectModelEnsemble.verify() does not add climpred attributes to skill by default anymore. (GH#560) Aaron Spring.

Drop python==3.6 support. (GH#573) Aaron Spring.

Notebooks are now linted with nb_black using %load_ext nb_black or %load_ext lab_black for Jupyter notebooks and Jupyter lab. (GH#526, GH#572) Aaron Spring.

Reduce dependencies to install climpred. (GH#454, GH#572) Aaron Spring.

Examples from documentation available via Binder. Find further examples in the examples folder. (GH#549, GH#578) Aaron Spring.

Rename branch master to main. (GH#579) Aaron Spring.

climpred v2.1.2 (2021-01-22)#

This release is the fixed version for our Journal of Open Source Software (JOSS) article about climpred, see review.

New Features#

Function to calculate predictability horizon predictability_horizon() based on condition. (GH#46, GH#521) Aaron Spring.
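One plausible reading of a predictability horizon "based on condition" is the last lead at which a boolean condition on skill still holds before it first fails. The sketch below implements that reading in plain Python; it is an assumption for illustration, not the definition used by predictability_horizon(), and the function name is hypothetical.

```python
def last_lead_before_failure(leads, condition):
    """Return the last lead for which `condition` holds before it first fails.

    leads: lead values in increasing order.
    condition: booleans of the same length (e.g. skill > threshold).
    Returns None if the condition already fails at the first lead.
    """
    horizon = None
    for lead, holds in zip(leads, condition):
        if not holds:
            break
        horizon = lead
    return horizon


leads = [1, 2, 3, 4, 5]
skill = [0.9, 0.7, 0.4, 0.6, 0.1]
# Condition: skill exceeds a (hypothetical) reference level of 0.5.
horizon = last_lead_before_failure(leads, [s > 0.5 for s in skill])
# horizon == 2: skill recovers at lead 4, but only after failing at lead 3.
```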

Bug fixes#

PredictionEnsemble.smooth() now carries lead.attrs. (GH#527, GH#521) Aaron Spring.

PerfectModelEnsemble.verify() now works with references also for geospatial inputs, which returned NaN before. (GH#522, GH#521) Aaron Spring.

PredictionEnsemble.plot() now shifts composite lead frequencies like days, pentads, seasons correctly. (GH#532, GH#533) Aaron Spring.

Adapt to xesmf>=0.5.2 for spatial xesmf smoothing. (GH#543, GH#548) Aaron Spring.

HindcastEnsemble.remove_bias() now carries attributes. (GH#531, GH#551) Aaron Spring.

climpred v2.1.1 (2020-10-13)#

Breaking changes#

This version introduces a lot of breaking changes. We are trying to overhaul climpred to have an intuitive API that also forces users to think about methodology choices when running functions. The main breaking changes we introduced are for HindcastEnsemble.verify() and PerfectModelEnsemble.verify(). Now, instead of assuming defaults for most keywords, we require the user to define metric, comparison, dim, and alignment (for hindcast systems). We also require users to designate the number of iterations for bootstrapping.

User now has to designate number of iterations with iterations=... in HindcastEnsemble.bootstrap(). (GH#384, GH#436) Aaron Spring and Riley X. Brady.

Make metric, comparison, dim, and alignment required (previous default None) arguments for HindcastEnsemble.verify(). (GH#384, GH#436) Aaron Spring and Riley X. Brady.

Metrics _brier_score and _threshold_brier_score() now require callable keyword argument logical instead of func. (GH#388) Aaron Spring.

HindcastEnsemble.verify() does not correct dim automatically to member for probabilistic metrics. (GH#282, GH#407) Aaron Spring.

Users can no longer add multiple observations to HindcastEnsemble. This will make current and future development much easier on maintainers. (GH#429, GH#453) Riley X. Brady.

Standardize the names of the output coordinates for PredictionEnsemble.verify() and PredictionEnsemble.bootstrap() to initialized, uninitialized, and persistence. initialized showcases the metric result after comparing the initialized ensemble to the verification data; uninitialized when comparing the uninitialized (historical) ensemble to the verification data; persistence is the evaluation of the persistence forecast. (GH#460, GH#478, GH#476, GH#480) Aaron Spring.

reference keyword in HindcastEnsemble.verify() should be chosen from [uninitialized, persistence]. historical no longer works. (GH#460, GH#478, GH#476, GH#480) Aaron Spring.

HindcastEnsemble.verify() returns no skill dimension if reference=None. (GH#480) Aaron Spring.

comparison is not applied to uninitialized skill in HindcastEnsemble.bootstrap(). (GH#352, GH#418) Aaron Spring.

New Features#

This release is accompanied by a bunch of new features. Math operations can now be used with our PredictionEnsemble objects and their variables can be sub-selected. Users can now quick plot time series forecasts with these objects. Bootstrapping is available for HindcastEnsemble. Spatial dimensions can be passed to metrics to do things like pattern correlation. New metrics have been implemented based on Contingency tables. We now include an early version of bias removal for HindcastEnsemble.

Use math operations like + - * / with HindcastEnsemble and PerfectModelEnsemble. See demo Arithmetic-Operations-with-PredictionEnsemble-Objects. (GH#377) Aaron Spring.

Subselect data variables from PerfectModelEnsemble as from xarray.Dataset: PredictionEnsemble[["var1", "var3"]]. (GH#409) Aaron Spring.

Plot all datasets in HindcastEnsemble or PerfectModelEnsemble by PredictionEnsemble.plot() if no other spatial dimensions are present. (GH#383) Aaron Spring.

Bootstrapping now available for HindcastEnsemble as HindcastEnsemble.bootstrap(), which is analogous to the PerfectModelEnsemble method. (GH#257, GH#418) Aaron Spring.

HindcastEnsemble.verify() allows all dimensions from initialized ensemble as dim. This allows e.g. spatial dimensions to be used for pattern correlation. Make sure to use skipna=True when using spatial dimensions and output has NaNs (in the case of land, for instance). (GH#282, GH#407) Aaron Spring.

Allow binary forecasts when calling HindcastEnsemble.verify(), rather than needing to supply binary results beforehand. In other words, hindcast.verify(metric='bs', comparison='m2o', dim='member', logical=logical) is now the same as hindcast.map(logical).verify(metric='brier_score', comparison='m2o', dim='member'). (GH#431) Aaron Spring.

Check calendar types when using HindcastEnsemble.add_observations(), HindcastEnsemble.add_uninitialized(), PerfectModelEnsemble.add_control() to ensure that the verification data calendars match that of the initialized ensemble. (GH#300, GH#452, GH#422, GH#462) Riley X. Brady and Aaron Spring.

Implement new metrics which have been ported over from https://github.com/csiro-dcfp/doppyo/ to xskillscore by Dougie Squire. (GH#439, GH#456) Aaron Spring

rank histogram _rank_histogram()

discrimination _discrimination()

reliability _reliability()

ranked probability score _rps()

contingency table and related scores _contingency()

Perfect Model PerfectModelEnsemble.verify() no longer requires control in PerfectModelEnsemble. It is only required when reference=['persistence']. (GH#461) Aaron Spring.

Implemented bias removal remove_bias. remove_bias(how='mean') removes the mean bias of initialized hindcasts with respect to observations. See example. (GH#389, GH#443, GH#459) Aaron Spring and Riley X. Brady.
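The role of the logical callable for binary forecasts can be illustrated with a small Brier score computation. This is a sketch of the general technique with plain lists, not the metric as implemented in climpred or xskillscore; the function names and the sample data are made up for the example.

```python
def brier_score(member_forecasts, observations, logical):
    """Brier score of a binary event defined by `logical`.

    member_forecasts: one list of ensemble member values per time step.
    observations: one observed value per time step.
    logical: callable turning a value into True/False (the event definition).
    """
    if len(member_forecasts) != len(observations) or not observations:
        raise ValueError("need one non-empty member list per observation")
    total = 0.0
    for members, observed in zip(member_forecasts, observations):
        # Forecast probability: fraction of members for which the event occurs.
        probability = sum(1 for m in members if logical(m)) / len(members)
        outcome = 1.0 if logical(observed) else 0.0
        total += (probability - outcome) ** 2
    return total / len(observations)


def is_positive(value):
    """Hypothetical event definition: the anomaly is above zero."""
    return value > 0


members = [[0.5, 1.0, -0.2, 0.3], [-1.0, -0.5, 0.1, -0.3]]
observed = [0.4, -0.2]
score = brier_score(members, observed, is_positive)
# score == 0.0625: forecast probabilities 0.75 and 0.25 against outcomes 1 and 0.
```

Applying the event definition inside the metric is what removes the need to convert forecasts and observations to binary values beforehand.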

Deprecated#

spatial_smoothing_xrcoarsen no longer used for spatial smoothing. (GH#391) Aaron Spring.

compute_metric, compute_uninitialized and compute_persistence no longer in use for PerfectModelEnsemble in favor of PerfectModelEnsemble.verify() with the reference keyword instead. (GH#436, GH#468, GH#472) Aaron Spring and Riley X. Brady.

'historical' no longer a valid choice for reference. Use 'uninitialized' instead. (GH#478) Aaron Spring.

Bug Fixes#

PredictionEnsemble.verify() and PredictionEnsemble.bootstrap() now accept metric_kwargs. (GH#387) Aaron Spring.

PerfectModelEnsemble.verify() now accepts 'uninitialized' as a reference. (GH#395) Riley X. Brady.

Spatial and temporal smoothing PredictionEnsemble.smooth() now works as expected and renames time dimensions after verify(). (GH#391) Aaron Spring.

PredictionEnsemble.verify(comparison='m2o', references=['uninitialized', 'persistence']) does not fail anymore. (GH#385, GH#400) Aaron Spring.

Remove bias using dayofyear in HindcastEnsemble.reduce_bias(). (GH#443) Aaron Spring.

climpred works with dask>=2.28. (GH#479, GH#482) Aaron Spring.

Documentation#

Updates climpred tagline to “Verification of weather and climate forecasts.” (GH#420) Riley X. Brady.

Adds section on how to use arithmetic with HindcastEnsemble. (GH#378) Riley X. Brady.

Add docs section for similar open-source forecasting packages. (GH#432) Riley X. Brady.

Add all metrics to main API in addition to metrics page. (GH#438) Riley X. Brady.

Add page on bias removal Aaron Spring.

Internals/Minor Fixes#

PredictionEnsemble.verify() replaces deprecated PerfectModelEnsemble.compute_metric() and accepts reference as keyword. (GH#387) Aaron Spring.

Cleared out unnecessary statistics functions from climpred and migrated them to esmtools. Add esmtools as a required package. (GH#395) Riley X. Brady.

Remove fixed pandas dependency from pandas=0.25 to stable pandas. (GH#402, GH#403) Aaron Spring.

dim is expected to be a list of strings in compute_perfect_model() and compute_hindcast(). (GH#282, GH#407) Aaron Spring.

Update cartopy requirement to 0.0.18 or greater to release lock on matplotlib version. Update xskillscore requirement to 0.0.18 to cooperate with new xarray version. (GH#451, GH#449) Riley X. Brady

Switch from Travis CI and Coveralls to Github Actions and CodeCov. (GH#471) Riley X. Brady

Assertion functions added for PerfectModelEnsemble: assert_PredictionEnsemble(). (GH#391) Aaron Spring.

Test all metrics against synthetic data. (GH#388) Aaron Spring.

climpred v2.1.0 (2020-06-08)#

Breaking Changes#

Keyword bootstrap has been replaced with iterations. We feel that this more accurately describes the argument, since “bootstrap” is really the process as a whole. (GH#354) Aaron Spring.
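The distinction drawn here, with bootstrapping as the overall process and iterations as the number of resamples, can be seen in a generic bootstrap of a mean. The sketch below is a textbook resampling loop with replacement and is not climpred's bootstrap code; the function name, the seed parameter and the sample values are assumptions for illustration.

```python
import random


def bootstrap_mean_interval(values, iterations, seed=0):
    """Approximate 95% interval of the mean by resampling with replacement.

    iterations: number of resamples drawn; the whole procedure is the bootstrap.
    """
    if iterations < 1 or not values:
        raise ValueError("need at least one value and one iteration")
    rng = random.Random(seed)
    n = len(values)
    means = []
    for _ in range(iterations):
        resample = [values[rng.randrange(n)] for _ in range(n)]
        means.append(sum(resample) / n)
    means.sort()
    lower = means[int(0.025 * (iterations - 1))]
    upper = means[int(0.975 * (iterations - 1))]
    return lower, upper


skill_per_init = [0.2, 0.5, 0.4, 0.7, 0.6, 0.3]
low, high = bootstrap_mean_interval(skill_per_init, iterations=500)
```

More iterations give a smoother estimate of the interval at proportionally higher cost, which is why the number is left for the user to choose.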

New Features#

HindcastEnsemble and PerfectModelEnsemble now use an HTML representation, following the more recent versions of xarray. (GH#371) Aaron Spring.

HindcastEnsemble.verify() now takes reference=... keyword. Current options are 'persistence' for a persistence forecast of the observations and 'uninitialized' for an uninitialized/historical reference, such as an uninitialized/forced run. (GH#341) Riley X. Brady.

We now only enforce a union of the initialization dates with observations if reference='persistence' for HindcastEnsemble. This is to ensure that the same set of initializations is used by the observations to construct a persistence forecast. (GH#341) Riley X. Brady.

compute_perfect_model() now accepts initialization (init) as cftime and int. cftime is now implemented into the bootstrap uninitialized functions for the perfect model configuration. (GH#332) Aaron Spring.

New explicit keywords in bootstrap functions for resampling_dim and reference_compute. (GH#320) Aaron Spring.

Logging now included for compute_hindcast which displays the inits and verification dates used at each lead. (GH#324) Aaron Spring, (GH#338) Riley X. Brady. See (logging).

New explicit keywords added for alignment of verification dates and initializations. (GH#324) Aaron Spring. See (alignment)

'maximize': Maximize the degrees of freedom by slicing hind and verif to a common time frame at each lead. (GH#338) Riley X. Brady.

'same_inits': slice to a common init frame prior to computing metric. This philosophy follows the thought that each lead should be based on the same set of initializations. (GH#328) Riley X. Brady.

'same_verifs': slice to a common/consistent verification time frame prior to computing metric. This philosophy follows the thought that each lead should be based on the same set of verification dates. (GH#331) Riley X. Brady.
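The three alignment strategies described above differ only in which initializations are kept at each lead. The sketch below spells this out for integer years, assuming the valid time of a forecast is init + lead. It is an illustration of the descriptions in this entry, not climpred's alignment code, and aligned_inits is a hypothetical name.

```python
def aligned_inits(inits, leads, verif_times, alignment):
    """Return {lead: inits used at that lead} for integer-year inputs.

    Illustrative assumption: valid time = init + lead.
    """
    inits = sorted(inits)
    init_set = set(inits)
    verif = set(verif_times)
    if alignment == "maximize":
        # Per lead, keep every init whose valid time is observed.
        return {lead: [i for i in inits if i + lead in verif] for lead in leads}
    if alignment == "same_inits":
        # Keep only inits that can be verified at every lead.
        common = [i for i in inits if all(i + lead in verif for lead in leads)]
        return {lead: list(common) for lead in leads}
    if alignment == "same_verifs":
        # Keep only verification times reachable from some init at every lead.
        common = sorted(
            v for v in verif if all(v - lead in init_set for lead in leads)
        )
        return {lead: [v - lead for v in common] for lead in leads}
    raise ValueError(f"unknown alignment: {alignment!r}")


inits = range(2000, 2006)        # 2000..2005
leads = [1, 2]
verif_times = range(2001, 2007)  # 2001..2006
result = {
    name: aligned_inits(inits, leads, verif_times, name)
    for name in ("maximize", "same_inits", "same_verifs")
}
```

In this toy setup, "maximize" uses six inits at lead 1 and five at lead 2, "same_inits" uses 2000 to 2004 at both leads, and "same_verifs" verifies both leads against 2002 to 2006, so the inits shift by one year between leads.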

Performance#

The major change for this release is a dramatic speedup in bootstrapping functions, led by Aaron Spring. We focused on scalability with dask and found many places we could compute skill simultaneously over all bootstrapped ensemble members rather than at each iteration.

Bootstrapping uninitialized skill in the perfect model framework is now sped up significantly for annual lead resolution. (GH#332) Aaron Spring.

General speedup in bootstrap_hindcast() and bootstrap_perfect_model(): (GH#285) Aaron Spring.

Properly implemented handling for lazy results when inputs are chunked.

User gets warned when chunking potentially unnecessarily and/or inefficiently.

Bug Fixes#

Alignment options now account for differences in the historical time series if reference='historical'. (GH#341) Riley X. Brady.

Internals/Minor Fixes#

Added a Code of Conduct (GH#285) Aaron Spring.

Gather pytest.fixture in conftest.py. (GH#313) Aaron Spring.

Move x_METRICS and COMPARISONS to metrics.py and comparisons.py in order to avoid circular import dependencies. (GH#315) Aaron Spring.

asv benchmarks added for HindcastEnsemble. (GH#285) Aaron Spring.

Ignore irrelevant warnings in pytest and mark slow tests. (GH#333) Aaron Spring.

Default CONCAT_KWARGS now in all xr.concat to speed up bootstrapping. (GH#330) Aaron Spring.

Remove member coords for m2c comparison for probabilistic metrics. (GH#330) Aaron Spring.

Refactored compute_hindcast() and compute_perfect_model(). (GH#330) Aaron Spring.

Changed lead0 coordinate modifications to be compliant with xarray=0.15.1 in compute_persistence(). (GH#348) Aaron Spring.

Exchanged my_quantile with xr.quantile(skipna=False). (GH#348) Aaron Spring.

Remove sig from plot_bootstrapped_skill_over_leadyear(). (GH#351) Aaron Spring.

Require xskillscore v0.0.15 and use their functions for effective sample size-based metrics. (GH#353) Riley X. Brady.

Faster bootstrapping without replacement used in threshold functions of climpred.stats. (GH#354) Aaron Spring.

Require cftime v1.1.2, which modifies their object handling to create 200-400x speedups in some basic operations. (GH#356) Riley X. Brady.

Resample first and then calculate skill in bootstrap_perfect_model() and bootstrap_hindcast(). (GH#355) Aaron Spring.

Documentation#

Added demo to set up your own raw model output compliant to climpred. (GH#296) Aaron Spring. See (here).

Added demo using intake-esm with climpred. See demo. (GH#296) Aaron Spring.

Added Verification Alignment page explaining how initializations are selected and aligned with verification data. (GH#328) Riley X. Brady. See (here).

climpred v2.0.0 (2020-01-22)#

New Features#

Add support for days, pentads, weeks, months, seasons for lead time resolution. climpred now requires a lead attribute “units” to decipher what resolution the predictions are at. (GH#294) Kathy Pegion and Riley X. Brady.

HindcastEnsemble now has HindcastEnsemble.add_observations() and HindcastEnsemble.get_observations() methods. These are the same as .add_reference() and .get_reference(), which will be deprecated eventually. The name change clears up confusion, since “reference” is the appropriate name for a reference forecast, e.g. "persistence". (GH#310) Riley X. Brady.

HindcastEnsemble now has .verify() function, which duplicates the .compute_metric() function. We feel that .verify() is more clear and easy to write, and follows the terminology of the field. (GH#310) Riley X. Brady.

e2o and m2o are now the preferred keywords for comparing hindcast ensemble means and ensemble members to verification data, respectively. (GH#310) Riley X. Brady.

Documentation#

New example pages for subseasonal-to-seasonal prediction using climpred. (GH#294) Kathy Pegion

Comparisons page rewritten for more clarity. (GH#310) Riley X. Brady.

Bug Fixes#

Fixed m2m broken comparison issue and removed correction. (GH#290) Aaron Spring.

Internals/Minor Fixes#

Updates to xskillscore v0.0.12 to get a 30-50% speedup in compute functions that rely on metrics from there. (GH#309) Riley X. Brady.

Stacking dims is handled by comparisons, no need for internal keyword stack_dims. Therefore comparison now takes metric as argument instead. (GH#290) Aaron Spring.

assign_attrs now carries dim. (GH#290) Aaron Spring.

reference changed to verif throughout hindcast compute functions. This is more clear, since reference usually refers to a type of forecast, such as persistence. (GH#310) Riley X. Brady.

Comparison objects can now have aliases. (GH#310) Riley X. Brady.

climpred v1.2.1 (2020-01-07)#

Deprecated#

mad no longer a keyword for the median absolute error metric. Users should now use median_absolute_error, which is identical to changes in xskillscore version 0.0.10. (GH#283) Riley X. Brady

pacc no longer a keyword for the p value associated with the Pearson product-moment correlation, since it is used by the correlation coefficient. (GH#283) Riley X. Brady

msss no longer a keyword for the Murphy’s MSSS, since it is reserved for the standard MSSS. (GH#283) Riley X. Brady

New Features#

Metrics pearson_r_eff_p_value and spearman_r_eff_p_value account for autocorrelation in computing p values. (GH#283) Riley X. Brady

Metric effective_sample_size computes number of independent samples between two time series being correlated. (GH#283) Riley X. Brady

Added keywords for metrics: (GH#283) Riley X. Brady

'pval' for pearson_r_p_value

['n_eff', 'eff_n'] for effective_sample_size

['p_pval_eff', 'pvalue_eff', 'pval_eff'] for pearson_r_eff_p_value

['spvalue', 'spval'] for spearman_r_p_value

['s_pval_eff', 'spvalue_eff', 'spval_eff'] for spearman_r_eff_p_value

'nev' for nmse

Internals/Minor Fixes#

climpred now requires xarray version 0.14.1 so that the drop_vars() keyword used in our package does not throw an error. (GH#276) Riley X. Brady

Update to xskillscore version 0.0.10 to fix errors in weighted metrics with pairwise NaNs. (GH#283) Riley X. Brady

doc8 added to pre-commit to have consistent formatting on .rst files. (GH#283) Riley X. Brady

Remove proper attribute on Metric class since it isn’t used anywhere. (GH#283) Riley X. Brady

Add testing for effective p values. (GH#283) Riley X. Brady

Add testing for whether metric aliases are repeated/overwrite each other. (GH#283) Riley X. Brady

ppp changed to msess, but keywords allow for ppp and msss still. (GH#283) Riley X. Brady
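
The kind of check described by "metric aliases are repeated/overwrite each other" could look roughly like the sketch below. It is not climpred's test code: the helper find_alias_collisions and the dictionary layout are hypothetical, and only the metric names and aliases are borrowed from the keyword list in this release.

```python
def find_alias_collisions(alias_table):
    """Return {keyword: [metric names]} for keywords claimed more than once."""
    owners = {}
    for metric_name, aliases in alias_table.items():
        # A metric's own name counts as a keyword too.
        for keyword in [metric_name, *aliases]:
            owners.setdefault(keyword, []).append(metric_name)
    return {kw: names for kw, names in owners.items() if len(names) > 1}


# Hypothetical alias table built from names mentioned in these notes.
clean_table = {
    "pearson_r_p_value": ["pval"],
    "effective_sample_size": ["n_eff", "eff_n"],
    "spearman_r_p_value": ["spvalue", "spval"],
}

# Deliberately broken copy: "pval" is now claimed by two metrics.
broken_table = dict(clean_table, spearman_r_p_value=["spvalue", "spval", "pval"])

clean_collisions = find_alias_collisions(clean_table)
broken_collisions = find_alias_collisions(broken_table)
```

A test would then simply assert that the collision dictionary for the real alias table is empty.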

Documentation#

Expansion of metrics documentation with much more detail on how metrics are computed, their keywords, references, min/max/perfect scores, etc. (GH#283) Riley X. Brady

Update terminology page with more information on metrics terminology. (GH#283) Riley X. Brady

climpred v1.2.0 (2019-12-17)#

Deprecated#

Abbreviation pval deprecated. Use p_pval for pearson_r_p_value instead. (GH#264) Aaron Spring.

New Features#

Users can now pass a custom metric or comparison to compute functions. (GH#268) Aaron Spring.

New deterministic metrics (see metrics). (GH#264) Aaron Spring.

Spearman ranked correlation (spearman_r)

Spearman ranked correlation p-value (spearman_r_p_value)

Mean Absolute Deviation (mad)

Mean Absolute Percent Error (mape)

Symmetric Mean Absolute Percent Error (smape)
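
For readers unfamiliar with the percent-error metrics, the sketch below shows one common definition of each in plain Python. Conventions differ between libraries (some multiply by 100, some scale SMAPE by 2), so treat the exact normalization here as an assumption. The argument names forecast and verif are illustrative.

```python
def mape(forecast, verif):
    """Mean of |forecast - verif| / |verif| (assumed convention, no x100)."""
    terms = [abs(f - v) / abs(v) for f, v in zip(forecast, verif)]
    return sum(terms) / len(terms)


def smape(forecast, verif):
    """Mean of |forecast - verif| / (|forecast| + |verif|) (assumed convention)."""
    terms = [abs(f - v) / (abs(f) + abs(v)) for f, v in zip(forecast, verif)]
    return sum(terms) / len(terms)


# Made-up forecast and verification values.
forecast = [110.0, 90.0, 105.0]
verif = [100.0, 100.0, 100.0]

mape_value = mape(forecast, verif)
smape_value = smape(forecast, verif)
```

With this normalization SMAPE stays between 0 and 1, whereas MAPE is unbounded above and undefined wherever the verification value is zero.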

Users can now apply arbitrary xarray methods to HindcastEnsemble and PerfectModelEnsemble. (GH#243) Riley X. Brady.

Add “getter” methods to HindcastEnsemble and PerfectModelEnsemble to retrieve xarray datasets from the objects. (GH#243) Riley X. Brady.

>>> hind = climpred.tutorial.load_dataset("CESM-DP-SST")

>>> ref = climpred.tutorial.load_dataset("ERSST")

>>> hindcast = climpred.HindcastEnsemble(hind)

>>> hindcast = hindcast.add_reference(ref, "ERSST")

>>> print(hindcast)

<climpred.HindcastEnsemble>

Initialized Ensemble:

SST (init, lead, member) float64 ...

ERSST:

SST (time) float32 ...

Uninitialized:

None

>>> print(hindcast.get_initialized())

<xarray.Dataset>

Dimensions: (init: 64, lead: 10, member: 10)

Coordinates:

* lead (lead) int32 1 2 3 4 5 6 7 8 9 10

* member (member) int32 1 2 3 4 5 6 7 8 9 10

* init (init) float32 1954.0 1955.0 1956.0 1957.0 ... 2015.0 2016.0 2017.0

Data variables:

SST (init, lead, member) float64 ...

>>> print(hindcast.get_reference("ERSST"))

<xarray.Dataset>

Dimensions: (time: 61)

Coordinates:

* time (time) int64 1955 1956 1957 1958 1959 ... 2011 2012 2013 2014 2015

Data variables:

SST (time) float32 ...

metric_kwargs can be passed to Metric. (GH#264) Aaron Spring.

See metric_kwargs under metrics.

Bug Fixes#

HindcastEnsemble.compute_metric() doesn’t drop coordinates from the initialized hindcast ensemble anymore. (GH#258) Aaron Spring.

Metric uacc does not crash when ppp is negative anymore. (GH#264) Aaron Spring.

Update xskillscore to version 0.0.9 to fix all-NaN issue with pearson_r and pearson_r_p_value when there’s missing data. (GH#269) Riley X. Brady.

Internals/Minor Fixes#

Rewrote varweighted_mean_period() based on xrft. Changed time_dim to dim. Function no longer drops coordinates. (GH#258) Aaron Spring

Add dim='time' in dpp(). (GH#258) Aaron Spring

Comparisons m2m, m2e rewritten to not stack dims into supervector because this is now done in xskillscore. (GH#264) Aaron Spring

Add tqdm progress bar to bootstrap_compute(). (GH#244) Aaron Spring

Remove inplace behavior for HindcastEnsemble and PerfectModelEnsemble. (GH#243) Riley X. Brady

Added tests for chunking with dask. (GH#258) Aaron Spring

Fix test issues with esmpy 8.0 by forcing esmpy 7.1 (GH#269). Riley X. Brady

Rewrote metrics and comparisons as classes to accommodate custom metrics and comparisons. (GH#268) Aaron Spring
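
As background on the comparisons named above, the sketch below shows the idea behind a member-to-ensemble-mean (m2e) comparison in plain Python: each member in turn serves as verification and is compared against the mean of the remaining members. This is a simplified reading of m2e on nested lists, not the climpred implementation. The helper name m2e_pairs and the toy data are hypothetical.

```python
from statistics import fmean


def m2e_pairs(members):
    """Pair each member with the mean of all other members.

    `members` is a list of equally long time series, one per member.
    Returns a list of (forecast, verif) tuples.
    """
    pairs = []
    for i, verif in enumerate(members):
        others = [m for j, m in enumerate(members) if j != i]
        # Ensemble mean at each time step, excluding the verification member.
        forecast = [fmean(step) for step in zip(*others)]
        pairs.append((forecast, verif))
    return pairs


# Three made-up members with two time steps each.
members = [[1.0, 2.0], [3.0, 4.0], [5.0, 6.0]]
pairs = m2e_pairs(members)
```

A metric is then evaluated on every (forecast, verif) pair, so no stacking of members into one long vector is needed on the caller's side.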

Documentation#

Add examples notebook for temporal and spatial smoothing. (GH#244) Aaron Spring

Add documentation for computing a metric over a specified dimension. (GH#244) Aaron Spring

Update API to be more organized with individual function/class pages. (GH#243) Riley X. Brady.

Add page describing the HindcastEnsemble and PerfectModelEnsemble objects more clearly. (GH#243) Riley X. Brady

Add page for publications and helpful links. (GH#270) Riley X. Brady.

climpred v1.1.0 (2019-09-23)#

Features#

Write information about skill computation to netcdf attributes (GH#213) Aaron Spring

Temporal and spatial smoothing module (GH#224) Aaron Spring

Add metrics brier_score, threshold_brier_score and crpss_es (GH#232) Aaron Spring

Allow compute_hindcast and compute_perfect_model to specify which dimension dim to calculate metric over (GH#232) Aaron Spring
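
To make the probabilistic metrics more concrete, here is a minimal sketch of a threshold-based Brier score in plain Python. It assumes the forecast probability is the fraction of ensemble members exceeding a threshold and the outcome is 1 when the observation exceeds it. The helper names and the numbers are illustrative and do not mirror the xskillscore signatures.

```python
def event_probability(member_values, threshold):
    """Fraction of ensemble members above the threshold."""
    return sum(v > threshold for v in member_values) / len(member_values)


def brier_score(event_probs, outcomes):
    """Mean squared difference between forecast probability and 0/1 outcome."""
    terms = [(p - o) ** 2 for p, o in zip(event_probs, outcomes)]
    return sum(terms) / len(terms)


# Two made-up forecasts with four members each, plus the matching observations.
ensembles = [[0.2, 0.6, 0.9, 1.1], [0.1, 0.2, 0.3, 0.7]]
observations = [0.8, 0.4]
threshold = 0.5

probs = [event_probability(members, threshold) for members in ensembles]
outcomes = [1 if obs > threshold else 0 for obs in observations]
score = brier_score(probs, outcomes)
```

A score of 0 is perfect; a forecast that always issues a probability of 0.5 scores 0.25.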

Bug Fixes#

Correct implementation of probabilistic metrics from xskillscore in compute_perfect_model, bootstrap_perfect_model, compute_hindcast and bootstrap_hindcast, now requires xskillscore>=0.05 (GH#232) Aaron Spring

Internals/Minor Fixes#

Rename .stats.DPP to dpp (GH#232) Aaron Spring

Add matplotlib as a main dependency so that a direct pip installation works (GH#211) Riley X. Brady.

climpred is now installable from conda-forge (GH#212) Riley X. Brady.

Fix erroneous descriptions of sample datasets (GH#226) Riley X. Brady.

Benchmarking time and peak memory of compute functions with asv (GH#231) Aaron Spring

Documentation#

Add scope of package to docs for clarity for users and developers. (GH#235) Riley X. Brady.

climpred v1.0.1 (2019-07-04)#

Bug Fixes#

Accommodate lead-zero within the lead dimension (GH#196) Riley X. Brady.

Fix issue with adding uninitialized ensemble to HindcastEnsemble object (GH#199) Riley X. Brady.

Allow max_dof keyword to be passed to compute_metric and compute_persistence for HindcastEnsemble. (GH#199) Riley X. Brady.

Internals/Minor Fixes#

Force xskillscore version 0.0.4 or higher to avoid ImportError (GH#204) Riley X. Brady.

Change max_dfs keyword to max_dof (GH#199) Riley X. Brady.

Add tests for HindcastEnsemble and PerfectModelEnsemble. (GH#199) Riley X. Brady

climpred v1.0.0 (2019-07-03)#

climpred v1.0.0 represents the first stable release of the package. It includes HindcastEnsemble and PerfectModelEnsemble objects to perform analysis with. It offers a suite of deterministic and probabilistic metrics that are optimized to be run on single time series or grids of data (e.g., lat, lon, and depth). Currently, climpred only supports annual forecasts.

Features#

Bootstrap prediction skill based on resampling with replacement consistently in ReferenceEnsemble and PerfectModelEnsemble. (GH#128) Aaron Spring

Consistent bootstrap function for climpred.stats functions via bootstrap_func wrapper. (GH#167) Aaron Spring

Many more metrics: _msss_murphy, _less and probabilistic _crps, _crpss (GH#128) Aaron Spring
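
The core idea of bootstrapping by resampling with replacement can be sketched in a few lines of plain Python. This is a generic percentile bootstrap under simple assumptions, not the climpred routine: bootstrap_ci, its parameters and the sample skill values are all hypothetical.

```python
import random
from statistics import fmean


def bootstrap_ci(values, statistic, iterations=1000, ci=0.95, seed=0):
    """Percentile confidence interval of `statistic` via resampling with replacement."""
    rng = random.Random(seed)
    n = len(values)
    # Each iteration draws n values with replacement and recomputes the statistic.
    stats = sorted(statistic(rng.choices(values, k=n)) for _ in range(iterations))
    lower_idx = int((1 - ci) / 2 * iterations)
    upper_idx = min(iterations - 1, int((1 + ci) / 2 * iterations) - 1)
    return stats[lower_idx], stats[upper_idx]


# Made-up skill values, e.g. one per ensemble member.
skill = [0.61, 0.55, 0.72, 0.48, 0.66, 0.59, 0.70, 0.52, 0.63, 0.57]
lower, upper = bootstrap_ci(skill, fmean)
```

The spread between lower and upper indicates how sensitive the statistic is to which members (or initializations) happen to be in the sample.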

Bug Fixes#

compute_uninitialized now trims input data to the same time window. (GH#193) Riley X. Brady

rm_poly now properly interpolates/fills NaNs. (GH#192) Riley X. Brady

Internals/Minor Fixes#

The climpred version can be printed. (GH#195) Riley X. Brady

Constants are made elegant and pushed to a separate module. (GH#184) Andrew Huang

Checks are consolidated to their own module. (GH#173) Andrew Huang

Documentation#

Documentation built extensively in multiple PRs.

climpred v0.3 (2019-04-27)#

climpred v0.3 really represents the entire development phase leading up to the version 1 release. This was done in collaboration between Riley X. Brady, Aaron Spring, and Andrew Huang. Future releases will have fewer additions.

Features#

Introduces object-oriented system to climpred, with classes ReferenceEnsemble and PerfectModelEnsemble. (GH#86) Riley X. Brady

Expands bootstrapping module for perfect-model configurations. (GH#78, GH#87) Aaron Spring

Adds functions for computing Relative Entropy (GH#73) Aaron Spring

Sets more intelligible dimension expectations for climpred (GH#98, GH#105) Riley X. Brady and Aaron Spring:

init: initialization dates for the prediction ensemble

lead: retrospective forecasts from prediction ensemble; returned dimension for prediction calculations

time: time dimension for control runs, references, etc.

member: ensemble member dimension.

Updates open_dataset to display available dataset names when no argument is passed. (GH#123) Riley X. Brady

Change ReferenceEnsemble to HindcastEnsemble. (GH#124) Riley X. Brady

Add probabilistic metrics to climpred. (GH#128) Aaron Spring

Consolidate separate perfect-model and hindcast functions into singular functions (GH#128) Aaron Spring

Add option to pass proxy through to open_dataset for firewalled networks. (GH#138) Riley X. Brady
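
The init, lead and time dimensions introduced in this release are related in a simple way: a forecast initialized at init verifies at time init + lead. The sketch below illustrates this in plain Python, assuming annual initializations and leads counted in years. The helper valid_times is hypothetical and not part of climpred.

```python
def valid_times(inits, leads):
    """Map every (init, lead) pair to the year it verifies in."""
    return {(init, lead): init + lead for init in inits for lead in leads}


# Made-up annual initializations and lead years.
inits = [1954, 1955]
leads = [1, 2, 3]
table = valid_times(inits, leads)

# Forecasts from different inits can verify against the same year.
same_target = [pair for pair, year in table.items() if year == 1956]
```

Here the lead-2 forecast from 1954 and the lead-1 forecast from 1955 both verify in 1956, which is why the time dimension of the verification data has to be aligned with both init and lead.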

Bug Fixes#

xr_rm_poly can now operate on Datasets and with multiple variables. It also interpolates across NaNs in time series. (GH#94) Andrew Huang

Travis CI, treon, and pytest all run for automated testing of new features. (GH#98, GH#105, GH#106) Riley X. Brady and Aaron Spring

Clean up check_xarray decorators and make sure that they work. (GH#142) Andrew Huang

Ensures that help() returns proper docstring even with decorators. (GH#149) Andrew Huang

Fixes bootstrap so p values are correct. (GH#170) Aaron Spring
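
One standard way to keep docstrings intact on decorated functions is functools.wraps from the Python standard library. The sketch below shows that technique on a toy input check. These notes do not say how climpred solved it, so treat this as an illustration only. The decorator check_is_number is a hypothetical stand-in for a check like check_xarray.

```python
import functools


def check_is_number(func):
    """Hypothetical input check that rejects non-numeric first arguments."""

    @functools.wraps(func)  # copies __name__, __doc__, etc. onto the wrapper
    def wrapper(value, *args, **kwargs):
        if not isinstance(value, (int, float)):
            raise TypeError(f"expected a number, got {type(value).__name__}")
        return func(value, *args, **kwargs)

    return wrapper


@check_is_number
def double(value):
    """Return twice the input."""
    return 2 * value


result = double(3)
doc = double.__doc__
```

Without functools.wraps, help(double) would describe the inner wrapper instead of the decorated function.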

Internals/Minor Fixes#

Adds unit testing for all perfect-model comparisons. (GH#107) Aaron Spring

Updates CESM-LE uninitialized ensemble sample data to have 34 members. (GH#113) Riley X. Brady

Adds MPI-ESM hindcast, historical, and assimilation sample data. (GH#119) Aaron Spring

Replaces check_xarray with a decorator for checking that input arguments are xarray objects. (GH#120) Andrew Huang

Add custom exceptions for clearer error reporting. (GH#139) Riley X. Brady

Remove “xr” prefix from stats module. (GH#144) Riley X. Brady

Add codecoverage for testing. (GH#152) Riley X. Brady

Update exception messages for prettier error reporting. (GH#156) Andrew Huang

Add pre-commit and flake8/black check in CI. (GH#163) Riley X. Brady

Change loadutils module to tutorial and open_dataset to load_dataset. (GH#164) Riley X. Brady

Remove predictability horizon function to revisit for v2. (GH#165) Riley X. Brady

Increase code coverage through more testing. (GH#167) Aaron Spring

Consolidates checks and constants into modules. (GH#173) Andrew Huang

climpred v0.2 (2019-01-11)#

Name changed to climpred, developed enough for basic decadal prediction tasks on a perfect-model ensemble and reference-based ensemble.

climpred v0.1 (2018-12-20)#

Collaboration between Riley Brady and Aaron Spring begins.