climpred.metrics._crpss

-

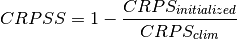

climpred.metrics._crpss(forecast, verif, dim=None, **metric_kwargs)

Continuous Ranked Probability Skill Score.

This can be used to assess whether the ensemble spread is a useful measure of forecast uncertainty, by comparing the CRPS of the ensemble forecast with that of a reference forecast that has the desired spread.
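The comparison described above can be sketched in plain Python. This is a minimal, library-independent illustration, not climpred's implementation: it assumes the usual skill-score form 1 - CRPS_forecast / CRPS_reference, computes the ensemble CRPS with the kernel identity E|X - y| - 0.5 E|X - X'|, and uses as reference a Gaussian whose mean and standard deviation are taken from the observations (a climatological reference, assumed here). All function names are hypothetical.

```python
import math
from statistics import mean, pstdev


def crps_ensemble(members, obs):
    """CRPS of the ensemble's empirical CDF via E|X - y| - 0.5 * E|X - X'|."""
    n = len(members)
    spread_to_obs = sum(abs(x - obs) for x in members) / n
    member_spread = sum(abs(a - b) for a in members for b in members) / (n * n)
    return spread_to_obs - 0.5 * member_spread


def crps_gaussian(mu, sigma, obs):
    """Closed-form CRPS of a N(mu, sigma**2) forecast at observation obs."""
    z = (obs - mu) / sigma
    pdf = math.exp(-0.5 * z * z) / math.sqrt(2.0 * math.pi)
    cdf = 0.5 * (1.0 + math.erf(z / math.sqrt(2.0)))
    return sigma * (z * (2.0 * cdf - 1.0) + 2.0 * pdf - 1.0 / math.sqrt(math.pi))


def crpss(ensembles, observations):
    """Skill of ensemble forecasts relative to a climatological Gaussian reference.

    Assumption (hypothetical setup): the reference is N(mean(obs), std(obs)),
    and CRPS is averaged over all cases before the ratio is taken.
    """
    mu, sigma = mean(observations), pstdev(observations)
    forecast_crps = mean(crps_ensemble(m, y) for m, y in zip(ensembles, observations))
    reference_crps = mean(crps_gaussian(mu, sigma, y) for y in observations)
    return 1.0 - forecast_crps / reference_crps


if __name__ == "__main__":
    obs = [0.2, -0.5, 1.1, 0.4]
    ens = [
        [0.1, 0.3, 0.2, 0.5],
        [-0.7, -0.4, -0.2, -0.6],
        [0.9, 1.3, 1.0, 1.4],
        [0.2, 0.6, 0.5, 0.3],
    ]
    print(f"CRPSS = {crpss(ens, obs):.3f}")
```

The sketch averages CRPS over all cases before taking the ratio; averaging per-case skill scores instead would generally give a different number.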

Note

When assuming a Gaussian distribution of forecasts, use the default gaussian=True. If the forecasts are not Gaussian, you may instead specify the distribution type (cdf_or_dist) and the integration bounds and tolerance (xmin, xmax and tol); see crps_quadrature().

- Parameters

forecast (xr.object) – Forecast with member dim.

verif (xr.object) – Verification data without member dim.

dim (list of str) – Dimension to apply metric over. Expects at least member. Other dimensions are passed to xskillscore and averaged.

metric_kwargs (dict) – Optional keyword arguments, including:

gaussian (bool, optional): If True, assume a Gaussian distribution for the baseline skill. Defaults to True.
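With gaussian=False, the baseline CRPS is obtained by numerically integrating a forecast CDF rather than using the Gaussian closed form. As a minimal sketch of what that integration computes, the code below evaluates CRPS = ∫ (F(x) - 1{x ≥ y})² dx over a truncated range [xmin, xmax] with a fixed-step trapezoid rule. The helper names (normal_cdf, uniform_cdf, _trapezoid, crps_by_quadrature) and the step count n are hypothetical; the crps_quadrature() routine referenced in the Note uses its own tolerance-controlled integration (the tol argument), not this fixed grid.

```python
import math


def normal_cdf(x, mu=0.0, sigma=1.0):
    """CDF of N(mu, sigma**2), using only the standard library."""
    return 0.5 * (1.0 + math.erf((x - mu) / (sigma * math.sqrt(2.0))))


def uniform_cdf(x, low=0.0, high=1.0):
    """CDF of the uniform distribution on [low, high]."""
    return min(max((x - low) / (high - low), 0.0), 1.0)


def _trapezoid(f, a, b, n):
    """Composite trapezoid rule for f on [a, b] with n sub-intervals."""
    h = (b - a) / n
    total = 0.5 * (f(a) + f(b)) + sum(f(a + i * h) for i in range(1, n))
    return total * h


def crps_by_quadrature(cdf, obs, xmin, xmax, n=10_000):
    """CRPS = integral of (F(x) - 1{x >= obs})**2 dx, truncated to [xmin, xmax].

    Assumes xmin < obs < xmax. The integral is split at obs, where the
    step function jumps, so neither piece contains that discontinuity.
    """
    below = _trapezoid(lambda x: cdf(x) ** 2, xmin, obs, n)
    above = _trapezoid(lambda x: (1.0 - cdf(x)) ** 2, obs, xmax, n)
    return below + above


if __name__ == "__main__":
    # Gaussian forecast N(0, 1) observed at its mean: closed form is (sqrt(2) - 1) / sqrt(pi).
    print(crps_by_quadrature(normal_cdf, 0.0, -10.0, 10.0))
    print((math.sqrt(2.0) - 1.0) / math.sqrt(math.pi))
    # Non-Gaussian forecast: uniform on [0, 1] observed at 0.5 (exact CRPS is 1/12).
    print(crps_by_quadrature(uniform_cdf, 0.5, -1.0, 2.0))
```

Splitting at the observation keeps the jump of the step function out of each piece, so a plain trapezoid rule converges quickly; the uniform case shows that any CDF, not only a Gaussian one, can be plugged in.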

- Details:

| Property | Value |
|---|---|
| minimum | -∞ |
| maximum | 1.0 |
| perfect | 1.0 |
| orientation | positive |
| better than climatology | > 0.0 |
| worse than climatology | < 0.0 |
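The ranges in the table follow from the skill-score form, assuming the usual definition with the reference (climatology) CRPS in the denominator. Because CRPS is non-negative, the score is capped at 1, is positive exactly when the forecast beats the reference, and has no lower bound:

```latex
\begin{aligned}
\mathrm{CRPSS} &= 1 - \frac{\mathrm{CRPS}_{\mathrm{fcst}}}{\mathrm{CRPS}_{\mathrm{ref}}},
  \qquad \mathrm{CRPS}_{\mathrm{fcst}} \ge 0,\ \mathrm{CRPS}_{\mathrm{ref}} > 0 \\
\mathrm{CRPS}_{\mathrm{fcst}} = 0 &\;\Rightarrow\; \mathrm{CRPSS} = 1 \\
\mathrm{CRPS}_{\mathrm{fcst}} < \mathrm{CRPS}_{\mathrm{ref}} &\;\Leftrightarrow\; \mathrm{CRPSS} > 0 \\
\mathrm{CRPS}_{\mathrm{fcst}} / \mathrm{CRPS}_{\mathrm{ref}} \to \infty &\;\Rightarrow\; \mathrm{CRPSS} \to -\infty \\
\text{e.g. } 1 - \tfrac{0.2}{0.4} = 0.5, &\qquad 1 - \tfrac{0.6}{0.4} = -0.5
\end{aligned}
```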

- Reference:

Matheson, James E., and Robert L. Winkler. “Scoring Rules for Continuous Probability Distributions.” Management Science 22, no. 10 (June 1, 1976): 1087–96. https://doi.org/10/cwwt4g.

Gneiting, Tilmann, and Adrian E. Raftery. “Strictly Proper Scoring Rules, Prediction, and Estimation.” Journal of the American Statistical Association 102, no. 477 (March 1, 2007): 359–78. https://doi.org/10/c6758w.

Example

>>> import scipy.stats
>>> hindcast.verify(metric='crpss', comparison='m2o', alignment='same_verifs', dim='member')
>>> perfect_model.verify(metric='crpss', comparison='m2m', dim='member', gaussian=False, cdf_or_dist=scipy.stats.norm, xmin=-10, xmax=10, tol=1e-6)

See also

crps_ensemble()